The need for efficient text summarization has never been more pressing. Whether you’re a student grappling with lengthy research papers or a professional navigating news articles, the ability to extract key insights quickly is invaluable. T5, a pre-trained language model famous for several NLP tasks, excels at text summarization. Text summarization using T5 is seamless with the Hugging Face API. However, fine-tuning T5 for text summarization can unlock many new capabilities. That’s exactly what we will discover in this article.

We will cover the following topics in this article:

- Firstly, we carry out text summarization using the pretrained T5 model from Hugging Face. This will reveal its strengths and limitations.

- Secondly, we will fine-tune the T5 model on the BBC news summarization dataset. Running inference using the trained model will reveal the difference that fine-tuning on task-specific datasets can make.

- Finally, we will build a local Gradio app for text summarization using T5.

Be sure to check the final results by clicking on this link. We will slowly unravel the potential of T5 for Text Summarization.

Why Text Summarization Matters

In today’s world, while data and information are abundant, humans are busier than ever. Many people prefer reading a summarized version of the news to reading a full-length article. This is where apps like Inshorts become valuable. They condense long-form news articles into 60-word summaries that one can easily consume while still gaining the key insights.

Although we will not be building a state-of-the-art app like Inshorts here, this can be a vital step in your project where you can build your own news summarizer. Perhaps you are a student who reads a lot of research papers. Creating a paper summarizer could make skimming through research papers easier. The possibilities are endless with text summarization using T5.

Without further ado, let’s dive into the technical details of the article.

Text Summarization using Pretrained T5 Model

Let’s start with using the pretrained T5 model from the Hugging Face Transformers library for text summarization.

You can access both the pretrained inference notebook and the fine-tuning notebook via the download section.

Starting with the necessary imports.

    from transformers import T5Tokenizer, T5ForConditionalGeneration
    import glob
    import pprint

    pp = pprint.PrettyPrinter()

As we are doing text summarization using T5, the tokenizer and model class have to match. The next step is to initialize the tokenizer and the model.

    tokenizer = T5Tokenizer.from_pretrained('t5-base')
    model = T5ForConditionalGeneration.from_pretrained('t5-base')

We are loading the T5 base model here.

The next code block defines a summarize_text function.

    def summarize_text(text, model, tokenizer, max_length=512, num_beams=5):
        # Preprocess the text: add the task prefix and truncate to max_length tokens
        inputs = tokenizer.encode(
            "summarize: " + text,
            return_tensors='pt',
            max_length=max_length,
            truncation=True
        )

        # Generate the summary (at most 50 tokens) using beam search
        summary_ids = model.generate(
            inputs,
            max_length=50,
            num_beams=num_beams,
            # early_stopping=True,
        )

        # Decode and return the summary
        return tokenizer.decode(summary_ids[0], skip_special_tokens=True)

There is one important point to note here. We are prepending the input text with “summarize: ”. Why do we need that?

If you go over the previous fine-tuning T5 article, you will find that the T5 model can perform several tasks, including text summarization. Each task is selected by a plain-text prefix prepended to the input rather than by a special token. For text summarization, the prefix is the string above.

We truncate the entire input article after 512 tokens, and decode the generated IDs from the model.
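As a small illustration of how task prefixes work, here is a minimal sketch that builds T5 inputs for a few tasks. The prefix strings are the ones used in the original T5 training mixture; the `T5_PREFIXES` dictionary and the `build_t5_input` helper are hypothetical names introduced only for this example.

```python
# Plain-text task prefixes from the original T5 training mixture.
# The dictionary and helper below are illustrative, not part of any library.
T5_PREFIXES = {
    "summarize": "summarize: ",
    "translate_en_de": "translate English to German: ",
    "cola": "cola sentence: ",
}


def build_t5_input(task: str, text: str) -> str:
    """Prepend the plain-text prefix that tells T5 which task to run."""
    return T5_PREFIXES[task] + text.strip()


print(build_t5_input("summarize", "The board announced a leadership change on Friday."))
```

The prefix is tokenized like any other text, which is why it counts toward the 512-token input limit.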

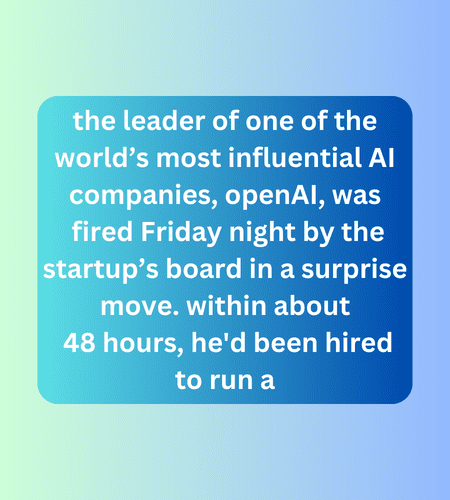

Here is a simple for loop going over a few text files in the inference_data directory. The news articles cover the recent infamous ousting of Sam Altman from OpenAI. Let’s check how well the pretrained T5 model can summarize the news for us.

    for file_path in glob.glob('inference_data/*.txt'):
        # Use a context manager so each file is closed after reading
        with open(file_path) as file:
            text = file.read()

        summary = summarize_text(text, model, tokenizer)
        pp.pprint(summary)
        print('-'*75)

Here are the results.

Although the text is shortened, we can right away spot some inconsistencies. It seems the model just lifted a few sentences from the middle of the article and joined them. The ending also seems off.

So, what will it take to build a much better summarizer? This is where fine-tuning T5 for text summarization comes in, because T5 is capable of abstractive summarization.

So, instead of just extracting words (extractive summarization) to create the summary, it can add new words to build cohesive sentences.
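To make the distinction concrete, here is a toy extractive summarizer. It is only a sketch of the extractive idea (score sentences by word frequency and copy the best ones verbatim), not what T5 does internally, and the `extractive_summary` function is a hypothetical helper written for this illustration.

```python
import re
from collections import Counter


def extractive_summary(text: str, num_sentences: int = 1) -> str:
    """Toy extractive summarizer: copy the sentences whose words are most frequent."""
    # Split on whitespace that follows sentence-ending punctuation.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    word_counts = Counter(re.findall(r"[a-z']+", text.lower()))

    def score(sentence: str) -> float:
        words = re.findall(r"[a-z']+", sentence.lower())
        # Average word frequency, so long sentences are not favored automatically.
        return sum(word_counts[w] for w in words) / max(len(words), 1)

    top = sorted(sentences, key=score, reverse=True)[:num_sentences]
    # Keep the original order so the summary reads naturally.
    return " ".join(s for s in sentences if s in top)


article = (
    "The board met on Friday. "
    "The board removed the chief executive. "
    "Staff were surprised."
)
print(extractive_summary(article, num_sentences=1))
```

Every sentence in the output appears word for word in the input. An abstractive model is free to rephrase and merge information instead.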

Are you new to Hugging Face and NLP? If yes, then do not miss the following articles on BERT to build a foundation with Hugging Face NLP.

- BERT: Bidirectional Encoder Representations from Transformers

- Fine-Tuning BERT using Hugging Face Transformers

Text Summarization using T5 – Training for Better Summarization

Training any Transformer model for text summarization can be a long and daunting task. However, the Hugging Face libraries make the process extremely easy.

The BBC News Summarization Dataset

To begin with, let’s talk about the dataset we will be using. The BBC news dataset is an extractive news summary dataset. It contains around 2200 news samples and summaries across different domains that include:

- Sport

- Business

- Politics

- Entertainment

- Tech

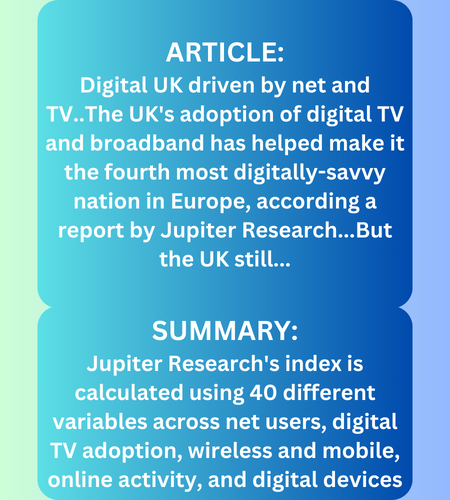

Although the sample count is not too high, it covers a wide range of text. For example, the following is a sample from the politics section of the dataset along with its summary.

The original article is truncated in the above image. It is evident that the sample summaries are concise and meaningful. Such a well managed dataset can help train a better summarization model than a large and ill-managed dataset.

One downside of using an extractive summarization dataset to train the T5 model is the originality of the summaries. Although T5 is a generative encoder-decoder Transformer model, when we train it on an extractive summarization dataset, it will mostly learn to extract sentences from the original article to form the final summary. Still, the fine-tuned T5 model should summarize noticeably better than the pretrained one.

Set Up and Installation of Dependencies

The first step that we need to do is install the dependencies that we need for training the text summarization T5 model.

    !pip install -U transformers
    !pip install -U datasets
    !pip install tensorboard
    !pip install sentencepiece
    !pip install accelerate
    !pip install evaluate
    !pip install rouge_score

Managing Imports

Next comes importing all the important libraries and modules.

    import torch
    import pprint
    import evaluate
    import numpy as np

    from transformers import (
        T5Tokenizer,
        T5ForConditionalGeneration,
        TrainingArguments,
        Trainer
    )
    from datasets import load_dataset

There are a few important libraries that we need to focus on from the installation and import commands:

- evaluate: The evaluate library helps us quickly evaluate transformer models from the Hugging Face library for different tasks. It can be text classification, question answering, and even text summarization.

- rouge_score: Text summarization is primarily evaluated through the ROUGE score. To load the ROUGE metric code using the evaluate library, we need to install rouge_score, although there isn’t any need to import it separately. We will get into the details of the ROUGE score later in the article.

Preparing the BBC News Summarization Dataset

The BBC News summarization dataset is available through the Hugging Face datasets library for seamless loading.

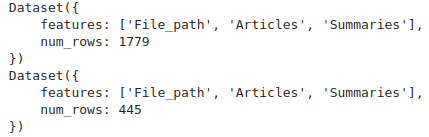

Let’s load the dataset, shuffle it, and create the training and validation splits.

    dataset = load_dataset('gopalkalpande/bbc-news-summary', split='train')
    full_dataset = dataset.train_test_split(test_size=0.2, shuffle=True)

    dataset_train = full_dataset['train']
    dataset_valid = full_dataset['test']

    print(dataset_train)
    print(dataset_valid)

We are using 80% of the samples for training and the rest for validation. The final training and validation splits are stored as Dataset objects in dataset_train and dataset_valid.

There are 1779 samples in the training set and 445 samples in the validation set.
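The split sizes can be checked with a little arithmetic. The sketch below assumes the dataset has 2224 samples in total (1779 + 445) and that the test share is rounded up to a whole sample. The `split_sizes` helper is hypothetical and only mirrors that rounding assumption.

```python
import math


def split_sizes(num_samples: int, test_size: float) -> tuple:
    """Return (train, test) counts, rounding the test share up (an assumption)."""
    num_test = math.ceil(num_samples * test_size)
    return num_samples - num_test, num_test


# 2224 is the total implied by the split sizes reported above.
print(split_sizes(2224, 0.2))
```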

Dataset Analysis

The downloadable notebook contains additional code for dataset analysis. From the analysis, we infer the following:

- There is just one article above 4000 words and 356 articles above 500 words.

- Nearly all summaries are below 200 words.

- The average length of the articles is around 384 words.

This information will be useful when tokenizing the dataset.

Training and Data Configurations

We need to set some basic configurations for the training and dataset preparation pipeline.

    MODEL = 't5-base'
    BATCH_SIZE = 4
    NUM_PROCS = 4
    EPOCHS = 10
    OUT_DIR = 'results_t5base'
    MAX_LENGTH = 512 # Maximum context length to consider while preparing dataset.

We choose to fine-tune the t5-base model. The batch size is 4 and the number of processes used for parallel processing is 4 as well. We will train for 10 epochs, and the maximum context length of the articles will be 512 tokens. Remember that the average article is around 384 words, which is roughly 500 tokens at about 1.3 tokens per word, so 512 tokens cover a typical article. Articles shorter than 512 tokens will be padded, and longer ones will be truncated, which mainly affects the longest articles in the dataset. This makes 512 a reasonable size for this dataset.

The model was fine-tuned on a system with RTX 4090 GPU with 24 GB VRAM. You may adjust the above hyperparameters based on the system you are training on.

Tokenizing the Dataset

Tokenization converts text into a sequence of integer IDs that the model can process. The tokenizer often splits a single word into several subword pieces, so a text usually has more tokens than words. As a rule of thumb, one word corresponds to roughly 1.3 tokens.

The following block contains the code for preprocessing and tokenization.
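The rule of thumb can be turned into a quick estimate of whether an article fits into the 512-token context. This is only a back-of-the-envelope sketch: the 1.3 ratio is the approximation from the text, not a measured value, and `estimated_tokens` and `fits_context` are hypothetical helpers.

```python
import math

TOKENS_PER_WORD = 1.3  # rule of thumb from the text, not a measured value


def estimated_tokens(num_words: int) -> int:
    """Estimate the token count of a text from its word count (rounded up)."""
    return math.ceil(num_words * TOKENS_PER_WORD)


def fits_context(num_words: int, max_length: int = 512) -> bool:
    """Check whether a text of num_words is likely to fit without truncation."""
    return estimated_tokens(num_words) <= max_length


for words in (384, 500):
    print(words, estimated_tokens(words), fits_context(words))
```

Under this estimate, an average-length article of 384 words just fits. Articles above 500 words are truncated.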

    tokenizer = T5Tokenizer.from_pretrained(MODEL)

    # Function to convert text data into model inputs and targets
    def preprocess_function(examples):
        # Prefix every article with the summarization task string
        inputs = [f"summarize: {article}" for article in examples['Articles']]
        model_inputs = tokenizer(
            inputs,
            max_length=MAX_LENGTH,
            truncation=True,
            padding='max_length'
        )

        # Tokenize the target summaries. The text_target argument replaces the
        # deprecated tokenizer.as_target_tokenizer() context manager.
        targets = list(examples['Summaries'])
        labels = tokenizer(
            text_target=targets,
            max_length=MAX_LENGTH,
            truncation=True,
            padding='max_length'
        )

        model_inputs["labels"] = labels["input_ids"]
        return model_inputs

    # Apply the function to the whole dataset
    tokenized_train = dataset_train.map(
        preprocess_function,
        batched=True,
        num_proc=NUM_PROCS
    )
    tokenized_valid = dataset_valid.map(
        preprocess_function,
        batched=True,
        num_proc=NUM_PROCS
    )

First, the T5 tokenizer is loaded, followed by the preprocessing function.

Note that for each input article, we again prepend the “summarize: ” text. This prefix tells the model which task to perform. The reference summaries go into the labels, so the model learns to generate the summary for each prefixed article.

The tokenized datasets are stored in tokenized_train and tokenized_valid respectively.

Initializing the Model

It’s straightforward to load the model from the transformers library.

    model = T5ForConditionalGeneration.from_pretrained(MODEL)
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    model.to(device)

    # Total parameters and trainable parameters.
    total_params = sum(p.numel() for p in model.parameters())
    print(f"{total_params:,} total parameters.")
    total_trainable_params = sum(
        p.numel() for p in model.parameters() if p.requires_grad)
    print(f"{total_trainable_params:,} training parameters.")

We load the T5 Base model and move it to the computation device. The T5 Base model contains around 223 million parameters. It may look like a large model but it works much better compared to the T5 Small model.

Defining the ROUGE Score Metric

ROUGE score is one of the most common metrics for evaluating deep learning based text summarization models.

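Let’s briefly go through what the ROUGE score is in NLP. In short, we will compute the ROUGE1, ROUGE2, and ROUGEL metrics. So, what does each of these mean? In very simple words:

- ROUGE1: It measures the overlap of single words (unigrams) between the prediction and the ground truth. Dividing the number of matching words by the number of words in the prediction gives the precision, and dividing by the number of words in the ground truth gives the recall.

- ROUGE2: It is the same measure computed over bi-grams (pairs of consecutive words) instead of single words.

- ROUGEL: It is a score based on the longest common subsequence between the prediction and the ground truth, that is, the longest sequence of words that appear in the same order in both, not necessarily contiguously.

The rouge metric from the evaluate library reports the F-measure for each of these, which combines precision and recall.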
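To see how these scores come about, here is a simplified, dependency-free sketch of ROUGE-N and ROUGE-L on whitespace-separated words. It is meant as an illustration only: the rouge_score package additionally handles tokenization details and optional stemming, and the function names below are hypothetical.

```python
from collections import Counter


def ngrams(tokens, n):
    """Count the n-grams (as tuples) in a list of tokens."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def prf(matches, pred_total, ref_total):
    """Precision, recall, and F1 from a match count and the two totals."""
    precision = matches / max(pred_total, 1)
    recall = matches / max(ref_total, 1)
    f1 = 0.0 if matches == 0 else 2 * precision * recall / (precision + recall)
    return {"precision": precision, "recall": recall, "f1": f1}


def rouge_n(prediction, reference, n=1):
    """ROUGE-N: clipped n-gram overlap between prediction and reference."""
    pred = ngrams(prediction.lower().split(), n)
    ref = ngrams(reference.lower().split(), n)
    overlap = sum((pred & ref).values())  # each n-gram counts at most min(pred, ref) times
    return prf(overlap, sum(pred.values()), sum(ref.values()))


def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l(prediction, reference):
    """ROUGE-L: precision/recall/F1 based on the longest common subsequence."""
    pred, ref = prediction.lower().split(), reference.lower().split()
    return prf(lcs_length(pred, ref), len(pred), len(ref))


prediction = "the cat sat"
reference = "the cat sat on the mat"
print(rouge_n(prediction, reference, n=1))
print(rouge_n(prediction, reference, n=2))
print(rouge_l(prediction, reference))
```

In this example every predicted word appears in the reference, so the ROUGE-1 precision is 1.0. The prediction covers only half of the reference, so the recall is 0.5.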

Defining the ROUGE metric is quite easy.

    rouge = evaluate.load("rouge")

    def compute_metrics(eval_pred):
        # predictions[0] holds the predicted token IDs, label_ids the references
        predictions, labels = eval_pred.predictions[0], eval_pred.label_ids
        decoded_preds = tokenizer.batch_decode(predictions, skip_special_tokens=True)

        # Replace the ignore index (-100) with the pad token so the labels can be decoded
        labels = np.where(labels != -100, labels, tokenizer.pad_token_id)
        decoded_labels = tokenizer.batch_decode(labels, skip_special_tokens=True)

        result = rouge.compute(
            predictions=decoded_preds,
            references=decoded_labels,
            use_stemmer=True,
            rouge_types=[
                'rouge1',
                'rouge2',
                'rougeL'
            ]
        )

        # Average prediction length, counted as non-padding tokens
        prediction_lens = [np.count_nonzero(pred != tokenizer.pad_token_id) for pred in predictions]
        result["gen_len"] = np.mean(prediction_lens)

        return {k: round(v, 4) for k, v in result.items()}

We provide the name of the metric (rouge in this case) to the evaluate library. The compute_metrics function is created for use by the Trainer API, which calls it following each evaluation step. You may note that we are passing the ROUGE metrics we want to compute to the compute method.

However, there is one important step before we move to the training phase. During evaluation, the Trainer accumulates the model outputs for the whole validation set on the GPU before computing the metrics, and at the time of writing this article, there is also a possible memory leak in the library. With full logits over the entire vocabulary, this causes an OOM error even with 24 GB VRAM GPUs. To mitigate this, we reduce the logits to predicted token IDs with the following preprocessing function before the metric computation happens.

    def preprocess_logits_for_metrics(logits, labels):
        """
        Original Trainer may have a memory leak.
        This is a workaround to avoid storing too many tensors that are not needed.
        """
        # Keep only the most likely token ID at each position instead of the full logits
        pred_ids = torch.argmax(logits[0], dim=-1)
        return pred_ids, labels

The solution has been taken from this discussion thread.
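A rough calculation shows why keeping the full logits is so expensive. The sketch below assumes 32-bit float logits, a model vocabulary size of 32128 for t5-base, and label sequences padded to 512 tokens. These are assumptions for a back-of-the-envelope estimate rather than measured values, and the helper names are hypothetical.

```python
def logits_bytes(num_samples, seq_len, vocab_size, bytes_per_value=4):
    """Memory needed to keep one float per (sample, position, vocabulary entry)."""
    return num_samples * seq_len * vocab_size * bytes_per_value


def token_id_bytes(num_samples, seq_len, bytes_per_value=8):
    """Memory needed to keep one 64-bit token ID per (sample, position)."""
    return num_samples * seq_len * bytes_per_value


NUM_VALID = 445      # validation samples
SEQ_LEN = 512        # labels are padded to MAX_LENGTH
VOCAB_SIZE = 32128   # assumed vocabulary size of the t5-base model config

full = logits_bytes(NUM_VALID, SEQ_LEN, VOCAB_SIZE)
ids = token_id_bytes(NUM_VALID, SEQ_LEN)
print(f"full logits: {full / 1e9:.1f} GB, token IDs only: {ids / 1e6:.1f} MB")
```

Under these assumptions, the accumulated logits alone would exceed the memory of a 24 GB GPU, while the argmax token IDs need only a couple of megabytes.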

Training the Model

To train the text summarization model using T5, we need to define the training arguments and training API.

    training_args = TrainingArguments(
        output_dir=OUT_DIR,
        num_train_epochs=EPOCHS,
        per_device_train_batch_size=BATCH_SIZE,
        per_device_eval_batch_size=BATCH_SIZE,
        warmup_steps=500,
        weight_decay=0.01,
        logging_dir=OUT_DIR,
        logging_steps=10,
        # Note: newer transformers releases rename this argument to eval_strategy
        evaluation_strategy='steps',
        eval_steps=200,
        save_strategy='epoch',
        save_total_limit=2,
        report_to='tensorboard',
        learning_rate=0.0001,
        dataloader_num_workers=4
    )

    trainer = Trainer(
        model=model,
        args=training_args,
        train_dataset=tokenized_train,
        eval_dataset=tokenized_valid,
        preprocess_logits_for_metrics=preprocess_logits_for_metrics,
        compute_metrics=compute_metrics
    )

    history = trainer.train()

The model will be evaluated every 200 steps. Do note that we pass the preprocess_logits_for_metrics and compute_metrics methods to the Trainer API. Most of the processes here are similar to what we did in the previous Hugging Face training articles.
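The training schedule implied by these settings can be worked out by hand. The following sketch assumes a single GPU and no gradient accumulation, so one optimizer step processes one batch. The `training_schedule` helper is hypothetical.

```python
import math


def training_schedule(num_train, batch_size, epochs, eval_steps):
    """Derive step counts from the dataset size and training configuration."""
    steps_per_epoch = math.ceil(num_train / batch_size)  # the last batch may be smaller
    total_steps = steps_per_epoch * epochs
    return {
        "steps_per_epoch": steps_per_epoch,
        "total_steps": total_steps,
        "num_evaluations": total_steps // eval_steps,
    }


print(training_schedule(num_train=1779, batch_size=4, epochs=10, eval_steps=200))
```

With 1779 training samples and a batch size of 4, each epoch takes 445 steps, so 10 epochs end at step 4450. This matches the checkpoint-4450 directory used for inference.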

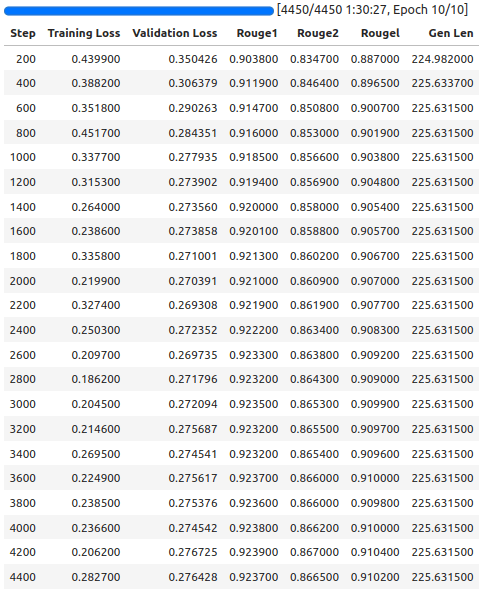

Here are the final training results.

The final evaluation step shows a ROUGE score of 0.9102, which is quite good. For inference, we will use the checkpoint saved after the final epoch.

Text Summarization Inference using the Trained T5 Model

The inference code for text summarization using the T5 model we just trained is very similar to what we did in the pretrained inference section.

The notebook automatically downloads and extracts the inference data. The only thing that changes is how we load the trained models.

    model_path = f"{OUT_DIR}/checkpoint-4450" # the path where you saved your model
    model = T5ForConditionalGeneration.from_pretrained(model_path)
    # Assumes the tokenizer files were saved to OUT_DIR; otherwise load the tokenizer from 't5-base'
    tokenizer = T5Tokenizer.from_pretrained(OUT_DIR)

Running inference again on the same news article produces the following outputs.

Amazing! The outcomes now are significantly better than before. The model successfully extracts all the key points from the articles and concludes at a point that keeps the summary cohesive.

This shows the power of fine-tuning the T5 model on task-specific summarization datasets.

Building a Gradio App for Text Summarization

Let’s make this process even more interesting. Instead of probing the T5 model for summarization through code, we can do so using a simple UI.

In this section, we will build a simple locally hosted web app using Gradio. All the code for this is present in the app.py script.

Make sure to install Gradio in your current environment before moving further.

    pip install gradio

We need just two imports here.

    import gradio as gr
    from transformers import T5ForConditionalGeneration, T5Tokenizer

We need to define a summarize function that is very similar to what we did above.

    def summarize_text(text):
        # Preprocess the text: add the task prefix, then truncate or pad to 512 tokens
        inputs = tokenizer.encode(
            "summarize: " + text,
            return_tensors='pt',
            max_length=512,
            truncation=True,
            padding='max_length'
        )

        # Generate the summary (at most 50 tokens) using beam search
        summary_ids = model.generate(
            inputs,
            max_length=50,
            num_beams=5,
            # early_stopping=True
        )

        # Decode and return the summary
        return tokenizer.decode(summary_ids[0], skip_special_tokens=True)

Next, load the model.

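    model_path = 'results_t5base/checkpoint-4450' # the path where you saved your model
    model = T5ForConditionalGeneration.from_pretrained(model_path)
    # Assumes the tokenizer files were saved to results_t5base; otherwise load the tokenizer from 't5-base'
    tokenizer = T5Tokenizer.from_pretrained('results_t5base')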

The summarize function will only be executed once we input some text in a text box and press the Submit button. For that to happen, we need to define a Gradio interface.

    interface = gr.Interface(
        fn=summarize_text,
        inputs=gr.Textbox(lines=10, placeholder='Enter Text Here...', label='Input text'),
        outputs=gr.Textbox(label='Summarized Text'),
        title='Text Summarizer using T5'
    )

    interface.launch()

It accepts three necessary components:

- fn: It accepts an executable function that will be called when the Submit button is pressed.

- inputs and outputs: These Gradio components accept the input and show the output. Both of these are text boxes in our case.

Finally, the interface.launch() launches a local web app.

We can simply execute the script to start the application.

    python app.py

You can open the link provided in the terminal and the application should run in the browser.

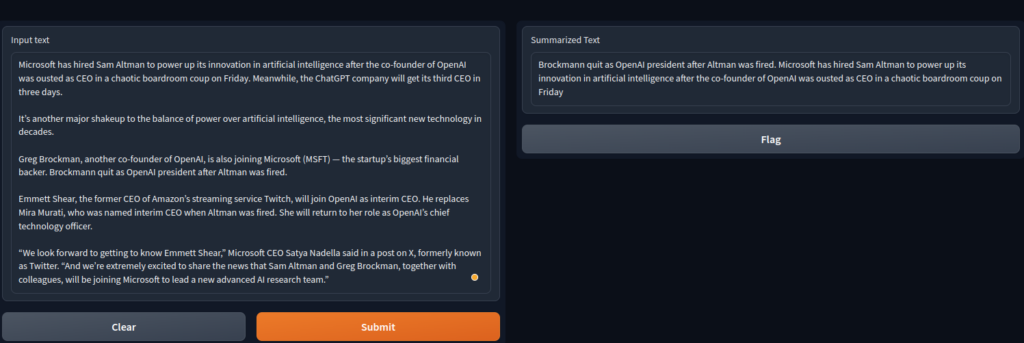

Following is a screenshot of how the interface looks when we input text and get the summarized output from the model.

This shows the endless potential of what we can build using custom models and have fun with them too.

Summary and Conclusion

We went through many concepts and a lot of code in this article. We started with a brief description of the need for text summarization models and then moved on to using a pretrained model for summarization. Upon learning its limitations, we trained our own T5 model for summarization. Although trained on a small dataset, the model performed quite impressively after fine-tuning. We did not stop there. Creating a Gradio app showed the possible applications that can be built using text summarization models.

Did this article intrigue you into building your own text summarization model? If so, what are you going to train the model on? Let us know in the comments.